AI is starting to change how investors judge capital raisers.

Not because investors suddenly “love AI.”

And not because AI makes deals better.

But because AI makes it easier than ever to produce polished materials that don’t match reality. So, investors are adapting by tightening scrutiny earlier.

That’s the trust gap: when output quality rises faster than underlying judgment.

In our latest Avestor leadership webinar, Badri Malynur and Richard Wilson kept coming back to a practical point: AI can be real leverage, but only when sponsors are clear about what AI can’t do.

This blog breaks down what’s actually happening, why investors are reacting the way they are, and how capital raisers can use AI without quietly eroding credibility.

The Shift Investors Won’t Say Out Loud

A few years ago, “professional materials” were a signal of competence.

Now, investors know professional materials can be generated in an afternoon.

That’s not paranoia. It’s the new baseline. In the 2025 Edelman Trust Barometer, a majority of respondents said they worry about innovation being poorly managed, and voiced concerns around misinformation and lack of trust.

At the same time, AI adoption inside finance workflows is accelerating. A 2025 banking industry survey (Temenos / Hanover Research, as covered by ABA Banking Journal) reported widespread momentum around generative AI use cases and deployment intent across banks.

When the tools scale faster than the standards, investors do what they always do: they raise the bar on verification.

This is why diligence is creeping earlier in the process: not because investors want to slow down, but because they’re trying to protect decision quality.

Want to build scalable capital raising systems that earn investor trust regardless of technology? Explore Avestor’s 6 Pillars of Growth.

Where The Trust Gap Shows Up in Real Capital Raising

Investors rarely say, “We don’t trust this because it sounds AI-generated.”

They say things like:

“Can you walk me through how you got this number?”

“What’s the source for that claim?”

“Who’s accountable for this language?”

“What happens if reality deviates from the model?”

The trust gap shows up when AI makes something sound clear, but the operator can’t defend it under pressure.

In the webinar, Avestor’s Co-founder Badri Malynur framed it in a way that landed: AI doesn’t replace judgment; it amplifies it. If the underlying judgment is strong, AI can compress time and improve preparation. If judgment is weak, AI makes weak thinking look confident.

Richard Wilson, the CEO of Family Office Club, pushed the same theme from a different angle: the market is entering an era where “looking professional” is no longer proof. What matters is whether the sponsor can explain the work behind the output.

That’s the trust gap in one sentence:

Investors are reacting to “clarity without proof.”

We unpack this distinction in more detail in the full leadership session, including live Q&A and practical examples from active fund managers.

The Three Most Common “AI Credibility Leaks”

These are patterns we’re seeing across private market workflows. They aren’t theoretical risks; they’re practical points where trust quietly breaks.

1) “Polished” Language That Changes Meaning

AI is great at rewriting.

That’s exactly why it’s dangerous around anything investor-facing that carries legal, compliance, or fiduciary weight.

The risk isn’t that AI produces nonsense.

The risk is that it produces language that reads better while subtly shifting meaning, especially around:

- investor rights and obligations

- distribution mechanics

- fees and expense allocation

- risk language and forward-looking statements

- who decides what, when, and how

This is where Badri’s point matters: AI can speed up drafts, but it doesn’t absorb responsibility.

The accountability stays with the sponsor.

2) Confident Answers That Collapse In Q&A

AI makes it easy to produce a fast response to an investor’s question.

But investors don’t evaluate you by your first answer.

They evaluate you by what happens when they push.

If your process becomes “AI → send,” you’ll eventually hit the moment where an LP asks a second-order question and the logic breaks.

That’s not an AI failure.

That’s a workflow failure.

The solution isn’t to avoid AI; it’s to treat AI as prep, not authority.

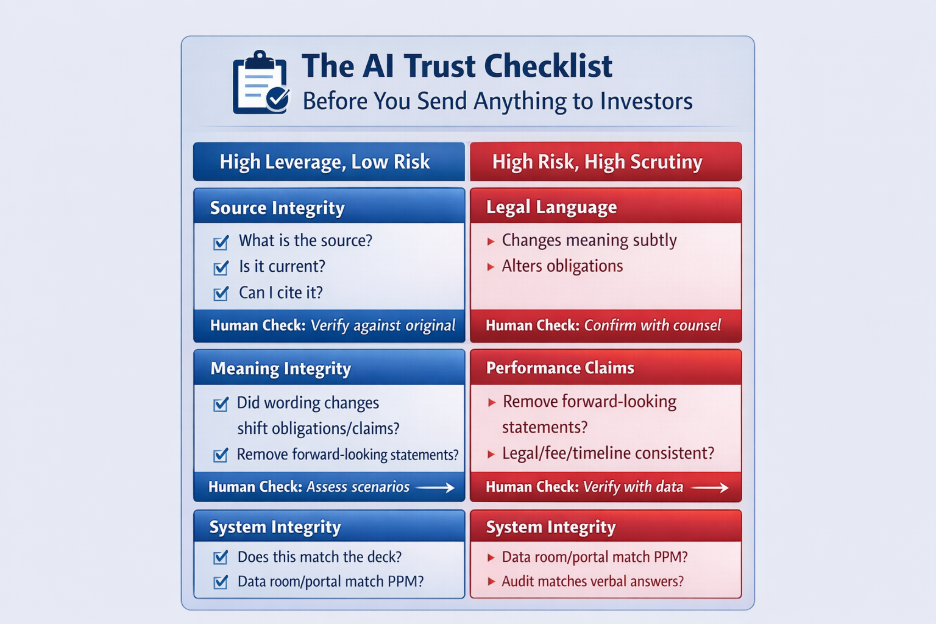

3) Inconsistent “Systems” Across Assets

One of the most underrated trust signals in private markets is consistency.

Investors don’t need rigidity.

They need repeatability: a clear sense of how decisions scale across deals, raises, and reporting cycles.

AI can accidentally create inconsistency at scale:

- Your deck says one thing

- Your memo says another

- Your portal language differs from your PPM

- Your follow-up emails introduce new claims or timelines

When investors see inconsistency, they don’t label it “AI risk.”

They label it operator risk.

What Disciplined AI Usage Actually Looks Like

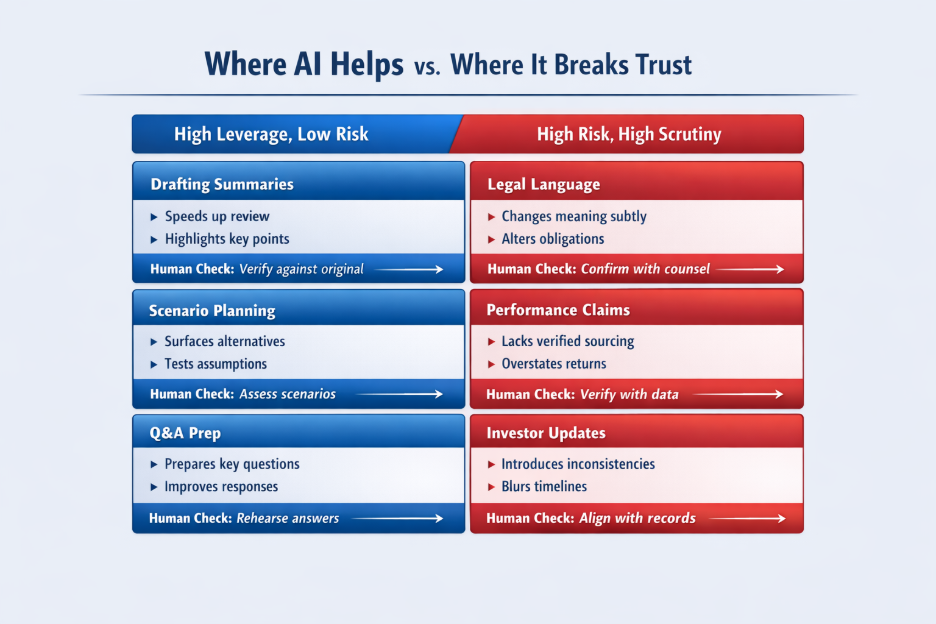

In the webinar, both Badri and Richard were clear: the goal isn’t to “use more AI.” It’s to use AI in places where it improves thinking without replacing judgment.

Here’s the operating model that tends to hold up:

Use AI for:

- Pressure-testing assumptions before LP meetings

- Summarizing documents so you can review faster (then verify)

- Drafting internal outlines and first passes (then human-check)

- Scenario planning prompts to surface blind spots

- Q&A rehearsal (“what would an LP challenge here?”)

Don’t use AI as:

- Final legal language

- Final compliance interpretation

- Final performance claims or sourcing

- A substitute for counsel, verification, or accountability

The best capital raisers aren’t using AI to sound smarter.

They’re using it to arrive smarter before the conversation.

That’s how trust is built: not by faster output, but by stronger preparation.

Learn how leading fund managers treat AI as a strategic assistant, not a replacement for judgment.

Why This Matters More in 2026 Than It Did in 2024

As AI becomes universal, differentiation shifts.

Soon, everyone will be able to produce:

- A clean deck

- A solid memo

- A well-written investor update

So, investors will differentiate based on what AI can’t mass-produce:

- Operator clarity

- Repeatable process

- Defensible assumptions

- Consistent investor experience

- Accountable decision-making

Or said another way:

AI raises the floor. It also raises the standard.

AI isn’t the enemy of trust.

Unexamined AI workflows are.

The capital raisers who will outperform in the next cycle won’t be the ones using the most AI. They’ll be the ones who know exactly where AI’s role should stop, and where human judgment must begin.

If you’re evaluating how AI fits into your capital raising process and want to ensure your infrastructure strengthens investor trust rather than weakens it, let’s talk!

👉 Book A Strategy Call With Our Team

We’ll walk through how disciplined operators are using AI inside structured, investor-ready systems, without introducing unnecessary risk.